David Tudor was a pianist and experimental music composer who pioneered live electronic music in the mid-1960s. He performed many early works by John Cage, Christian Wolff, Morton Feldman, Earle Brown, Karlheinz Stockhausen, Stefan Wolpe, and La Monte Young. Many of these composers wrote pieces expressly for Tudor, and he worked closely with Cage to develop many compositions. He became the pianist for the Merce Cunningham Dance Company, and he and Cage toured during the 1950s and 1960s with programs of Cage’s works.

Neural Synthesis

In 1989, after a show at Berkeley, he met technical writer and designer Forrest Warthman, who introduced him to the idea of using analogue neural networks to combine all his complex live electronics into one computer. One result is the CD “Neural Synthesis” (Track 6 in the video), for which Warthman writes in the liner notes:

the role of learner, pattern-recognizer and responder is played by David, himself a vastly more complex neural network than the chip

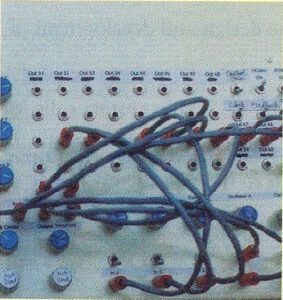

“The concept for the neural-network synthesizer grew out of a collaborative effort that began in 1989 at Berkeley where David was performing with the Merce Cunningham Dance Company. I listened from the front row as David moved among interconnected electronic devices that filled two tables. He created a stream of remarkable sounds, overlaid them, filtered them, and fed them back upon themselves until the stream became a river. His attention moved from device to device, tasting and adjusting the mixture of sound like a chef composing a fine sauce of the most aromatic ingredients. I was spellbound. At the intermission I proposed to him that we create a computer system capable of enveloping and integrating the sounds of his performances.

[…]

The unfolding of our ideas was an adventure in discovery and like all real adventures it led to unexpected places. What began as an effort to integrate the proliferation of electronic devices in David’s performance environment ended in the addition of yet another device. In an early phase, Ron Clapman of Bell Labs and I explored designs using PBXs and pen-on-screen interfaces to switch a broadband matrix of analog and digital signals, including not only audio but video and performance-hall control signals. This led us to think about parallel processors with rich feedback paths.

In 1990, the project took a fundamental turn when I met Mark Holler from Intel at a computer architecture conference near Carmel, California. Mark was introducing a new analog neural-network microchip whose design he had recently managed. The chip electronically emulates neuron cells in our brains and can process many analog signals in parallel. It seemed a perfect basis for an audio-signal router, so I approached Mark with the idea during a walk along the Pacific coast beach. He thought it could work, offered a chip for experimentation, and we struck up an immediate friendship. Soon after, another colleague, Mark Thorson, joined us to work out the first hardware design for what was to become not only a signal router but also the analog audio synthesizer that David uses in this performance. Throughout our research and development, David provided a guiding light. His artistic intuition and his experience with electronic performance helped shape our thinking at each step.

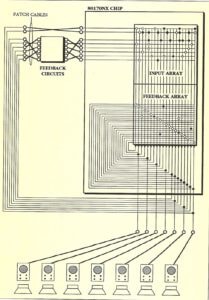

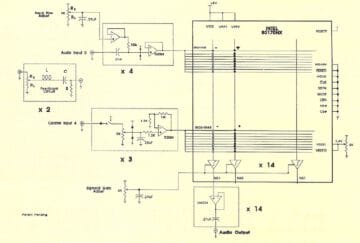

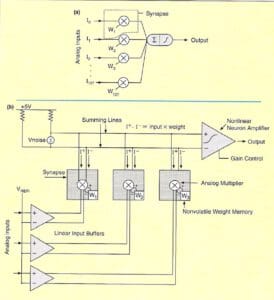

The neural-network chip forms the heart of the synthesizer. It consists of 64 non-linear amplifiers (the electronic neurons on the chip) with 10240 programmable connections. Any input signal can be connected to any neuron, the output of which can be fed back to any input via on-chip or off-chip paths, each with variable connection strength. The same floating-gate devices used in EEPROMs (electrically erasable, programmable, read-only memories) are used in an analog mode of operation to store the strengths of the connections. The synthesizer adds R-C (resistance-capacitance) tank circuits on feedback paths for 16 of the 64 neurons to control the frequencies of oscillation. The R-C circuits produce relaxation oscillations. Interconnecting many relaxation oscillators rapidly produces complex sounds. Global gain and bias signals on the chip control the relative amplitudes of neuron oscillations. Near the onset of oscillation the neurons are sensitive to inherent thermal noise produced by random motions of electron groups moving through the monolithic silicon lattice. This thermal noise adds unpredictability to the synthesizer’s outputs, something David found especially appealing.

The synthesizer’s performance console controls the neural-network chip, R-C circuits, external feedback paths and output channels. The chip itself is not used to its full potential in this first synthesizer. It generates sound and routes signals but the role of learner, pattern-recognizer and responder is played by David, himself a vastly more complex neural network than the chip. During performances David chooses from up to 14 channels of synthesizer output, modifying each of them with his other electronic devices to create the final signals. He debuted the synthesizer at the Paris Opera (Garnier) in November 1992 in performances of Enter, with the Merce Cunningham Dance Company. In the 1994 recording for this CD, made during later experiments with the synthesizer in Banff, four discrete channels representing 12 recorded tracks are mixed to stereo.

Audio oscillators have a subtle but long history in Silicon Valley. Many local engineers date the beginning of the semiconductor industry to the 1938 development by Bill Hewlett and David Packard of their first product, an audio oscillator, in a downtown Palo Alto garage just a few blocks from the studio in which this synthesizer was built. Our work in 1993 and 1994 with David Tudor has led to the development, principally by Mark Holler, of a second-generation synthesizer built around multiple neural-network chips. It is a hybrid system using the analog neural-network chips to produce audio waveforms and a small digital computer to control neuron interconnections. The analog waveforms have frequency components ranging up to 100kHz and are much more complex than can be produced by a digital computer in real time. The performer will use the computer to change tens of thousands of interneuron connections during performance. The space of possible interneuron configurations is so large that it is difficult to reproduce the behavior of the synthesizer, which can evolve sound on its own over time. Searching for the regions of configurations that produce captivating sound becomes the challenge. It is possible that, with further development, the computer may be useful in finding these regions. Until then, there is no substitute for David.

[…]”

The Chip

The chip used here is the Intel 80170NX neural processor, also known as ETANN (Electronically Trainable Analog Neural Network). Introduced by Intel around 1989, it was one of the first commercial neural processors. Implemented on a 1.0 µm process, the chip incorporated 64 analog neurons and 10,240 analog synapses. It is also regarded as the first commercial analog neural processor and the first commercially successful neural network chip.
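To get a feel for the behaviour the liner notes describe (non-linear amplifiers fed back onto each other through R-C paths, with a little noise near the onset of oscillation), here is a minimal software sketch. It is not a model of the ETANN or of the actual synthesizer circuit: the leaky-integrator update, the `tanh` non-linearity, the random connection strengths, the time constants, and the names `simulate`, `gain`, and `noise` are all assumptions made for illustration. Only the figures of 64 neurons and 10,240 connections come from the text.

```python
import math
import random

# Figures taken from the text above.
N_NEURONS = 64
N_CONNECTIONS = 10240
# Simple arithmetic: 10240 / 64 = 160 programmable connections per neuron.
CONNECTIONS_PER_NEURON = N_CONNECTIONS // N_NEURONS


def simulate(n=8, steps=2000, dt=1e-5, gain=4.0, noise=1e-3, seed=0):
    """Toy feedback network of saturating amplifiers with R-C lag.

    Everything here is hypothetical: a small network of n neurons,
    random connection strengths, and random time constants.
    Returns the output of neuron 0 at every time step.
    """
    rng = random.Random(seed)
    # Hypothetical connection strengths in [-1, 1] (the chip stores its
    # strengths in analog floating-gate cells; here they are just floats).
    w = [[rng.uniform(-1.0, 1.0) for _ in range(n)] for _ in range(n)]
    # Hypothetical R-C time constants between 0.1 ms and 1 ms.
    tau = [rng.uniform(1e-4, 1e-3) for _ in range(n)]
    v = [0.0] * n  # internal voltages, all starting at rest
    trace = []
    for _ in range(steps):
        # Saturating amplifier outputs, always within [-1, 1].
        y = [math.tanh(gain * x) for x in v]
        trace.append(y[0])
        new_v = []
        for i in range(n):
            # Weighted feedback from every neuron plus a small noise term.
            drive = sum(w[i][j] * y[j] for j in range(n))
            drive += rng.gauss(0.0, noise)
            # Forward-Euler step of tau * dv/dt = drive - v.
            new_v.append(v[i] + dt / tau[i] * (drive - v[i]))
        v = new_v
    return trace


if __name__ == "__main__":
    samples = simulate()
    print("connections per neuron:", CONNECTIONS_PER_NEURON)
    print("peak output of neuron 0:", max(abs(s) for s in samples))
```

Because the network starts at rest, the only thing that sets it in motion is the noise term, which loosely mirrors the sensitivity to thermal noise mentioned in the liner notes. Changing `seed`, `gain`, or the weight range gives a different evolution each time, which hints at why finding interesting regions of the configuration space is hard even in a toy version.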

In 1993, Forrest Warthman wrote an article about the synthesizer in Dr. Dobb's Journal, which can be read here as a PDF: Dr. Dobbs – A Neural-Network Audio Synthesizer.

Software-defined analog

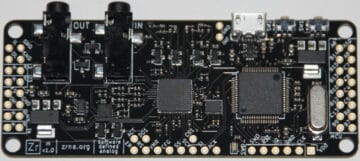

I think it would be interesting to recreate and further develop these early analog neural networks on modern software-defined analog hardware, like this by znra.

N.B.: the featured image was upscaled with an AI-based service, letsenhance.io. It’s beautiful how it gave David Tudor Spock ears.